Don't Let Your AI Become Your Liability

At Artura, we help organizations document how AI tools are used internally to support oversight, reduce risk, and demonstrate good-faith compliance in the event of a dispute or regulatory audit.

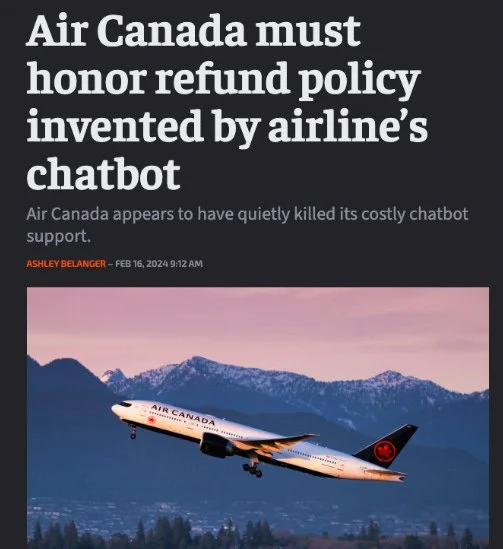

Organizations are already being held responsible for how AI is used

How can we help protect your organization?

We help organizations document how AI tools are used internally.

Our practical documentation demonstrates responsible oversight, accountability, and good-faith compliance to regulators, government agencies, and stakeholders, all without disrupting how your teams work.

Questions about AI use are now coming from clients, insurers, and internal risk teams

Documentation is often expected before any formal policy or audit exists.

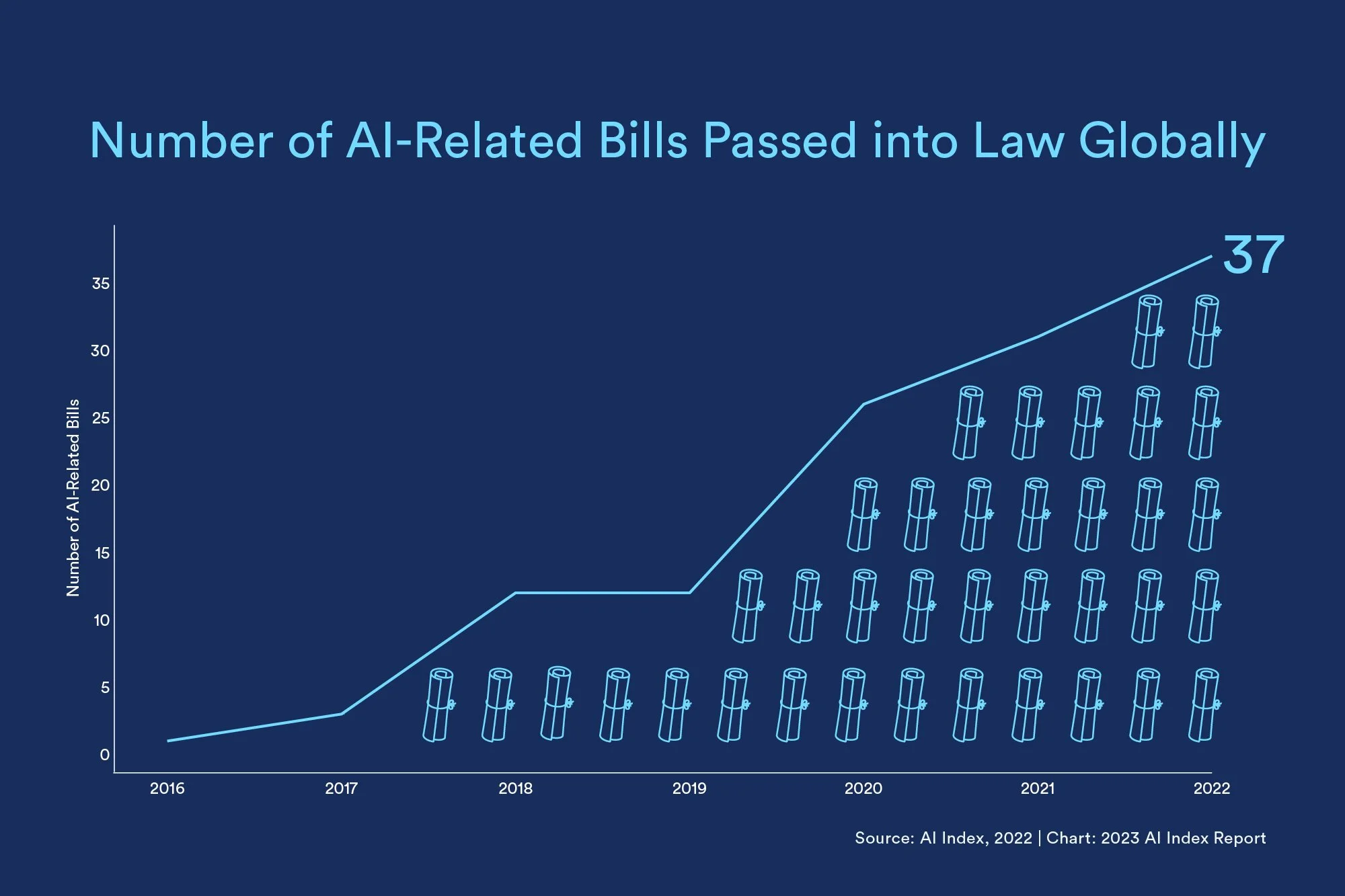

Organizations are increasingly expected to understand, manage, and stand behind how AI tools are used by their employees, even when those tools are informal, experimental, or not centrally approved.

Deliverables

Our specialized team creates professional governance frameworks aligned with the NIST AI Risk Management Framework (AI RMF), ISO/IEC 42001, and other applicable industry and regulatory guidance.

Our documentation helps you demonstrate good-faith compliance efforts in litigation, regulatory proceedings, insurance applications, and customer due diligence.

- Provides a professional record of current AI practices for internal governance, clients, or insurers.
- Reduces uncertainty and demonstrates responsible oversight.
- Can be completed quickly, with minimal internal effort.